Content of the article

- /01 What is social media content moderation

- /02 What a moderation strategy consists of

- /03 Moderation formats

- /04 How to engage with your audience on different platforms

- /05 How to handle negativity, spam, and fake accounts

- /06 When moderation becomes part of crisis communication

- /07 How to measure moderation effectiveness

- /08 Practical tips for a brand launching moderation

Social media has long since ceased to be just a platform for personal posts. Here, customers ask questions of business page owners, leave reviews, share experiences, compare brands, and expect a quick response. That is why content moderation today is part not only of communication but also of service, reputation management, and customer experience.

According to the Sprout Social Index study, 73% of consumers expect a response from a brand on social media within 24 hours or even sooner. In other words, the speed and quality of engagement directly impact audience loyalty and brand perception.

In this article, we’ll explore how to build an effective moderation strategy, what processes need to be implemented, how to handle negative comments, spam, and crisis situations, and which metrics can help evaluate the effectiveness of your social media engagement.

What is social media content moderation

Content moderation is the systematic management of audience interaction with a brand on social media. It covers all touchpoints where users can comment, ask questions, or share their opinions:

- comments under posts and ads;

- private messages;

- mentions;

- reviews;

- tags on photos and videos.

Moderation goes far beyond simply deleting unwanted comments. Its main goals are to foster constructive dialogue, protect the company’s reputation, and create a comfortable space for communication. It is through effective moderation that a brand demonstrates that it truly listens to its audience, responds to inquiries, and is open to communication.

Additionally, moderation serves as a tool for quickly identifying issues. For example, if similar complaints about delivery, payment, or product quality appear simultaneously in the comments, this is a signal for the SMM specialist, customer support, the sales department, or the operations team. In such cases, social media acts as a kind of early warning system.
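One way to picture this early warning idea is a simple counter over recent comments. The sketch below is only an illustration: the topic keywords, the one-hour window, and the threshold of three mentions are assumptions chosen for the example, and the comments are made-up data rather than output from any platform API.

```python
from collections import Counter
from datetime import datetime, timedelta

# Hypothetical topic keywords; a real team would maintain its own list.
TOPIC_KEYWORDS = {
    "delivery": ("delivery", "courier", "shipping"),
    "payment": ("payment", "charged", "refund"),
    "quality": ("broken", "defect", "quality"),
}


def detect_topic(text):
    """Return the first topic whose keyword appears in the text, or None."""
    lowered = text.lower()
    for topic, keywords in TOPIC_KEYWORDS.items():
        if any(word in lowered for word in keywords):
            return topic
    return None


def complaint_spikes(comments, now, window=timedelta(hours=1), threshold=3):
    """Return topics mentioned at least `threshold` times within `window` before `now`."""
    counts = Counter()
    for created_at, text in comments:
        if now - window <= created_at <= now:
            topic = detect_topic(text)
            if topic is not None:
                counts[topic] += 1
    return sorted(topic for topic, n in counts.items() if n >= threshold)


now = datetime(2026, 1, 10, 12, 0)
comments = [
    (now - timedelta(minutes=5), "Where is my delivery?"),
    (now - timedelta(minutes=20), "Courier never showed up"),
    (now - timedelta(minutes=40), "Shipping is taking forever"),
    (now - timedelta(minutes=10), "Love the new design!"),
    (now - timedelta(hours=3), "I was charged twice"),
]
print(complaint_spikes(comments, now))  # ['delivery']
```

In this example only the delivery topic crosses the threshold, which is the kind of signal that would be passed on to support or the operations team.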

It is important to understand that moderation is not censorship. Constructive criticism, questions, or even customer dissatisfaction should not be deleted simply because they are negative. On the contrary, a professional response to such feedback often benefits the brand far more than dozens of positive comments.

Only posts that violate community guidelines should be removed: spam, insults, fraud, hate speech, or blatant provocations.

That is why modern moderation is an integral part of customer service and helps not only maintain order but also build long-term relationships with the audience.

What a moderation strategy consists of

A moderation strategy is based on internal rules and the team’s operational logic.

- Clear community guidelines. The company must define what content is allowed and what requires removal, hiding, or reporting. This applies to spam, insults, fraudulent messages, aggressive advertising, and other undesirable actions.

- A consistent tone and response format. The brand chooses its communication style in advance: formal, friendly, neutral, or more personalized. It is important not only to define the tone but also to maintain it across all channels.

- Response scripts for typical situations. The team must understand how to act in the event of a complaint, a request for help, negative feedback, a fake account, or a public conflict. This simplifies the work and helps respond without unnecessary emotion.

- Scope of responsibility. Not all inquiries are handled by the moderator. Some requests are forwarded to support, PR, management, or another department. A clear division of roles makes communication more manageable.

- Escalation rules. For complex or high-risk situations, a separate algorithm for forwarding inquiries is needed. This is especially important when there is a threat of reputational damage or a response needs to be coordinated quickly.

- Work schedule and rhythm. Moderation must align with workload, time of day, and the brand’s social media activity. Without this approach, some messages may get lost in the general flow.

Remember! A well-thought-out strategy transforms moderation from a set of ad-hoc actions into a full-fledged business process.
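The scope of responsibility and escalation rules described above can be written down as a small routing table. This is a minimal sketch, not a prescribed setup: the categories, team names, and the `high_risk` flag are hypothetical and would be replaced by whatever a given company actually uses.

```python
from dataclasses import dataclass

# Hypothetical routing table: inquiry category -> team that owns it.
ROUTES = {
    "question": "moderator",
    "complaint": "support",
    "reputational_risk": "pr",
    "spam": "filter",
}


@dataclass
class Inquiry:
    text: str
    category: str
    high_risk: bool = False


def route(inquiry):
    """Pick the owner for an inquiry; high-risk cases always escalate to PR."""
    if inquiry.high_risk:
        return "pr"
    # Unknown categories stay with the moderator by default.
    return ROUTES.get(inquiry.category, "moderator")


print(route(Inquiry("My parcel is late", "complaint")))  # support
print(route(Inquiry("A journalist is asking about the recall", "question", high_risk=True)))  # pr
```

Keeping the rules in one table makes it easier to review them when lines of responsibility change.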

Moderation formats

The next step is to define the moderation format itself. It shows how deeply the team is involved in working with the audience and what tasks it takes on in daily communication.

Full moderation

This means the team controls the entire flow of interaction: comments, mentions, private messages, replies under content, and basic filtering of unwanted messages. This format is suitable for brands that are active on social media, receive a high volume of inquiries, or operate in niches where reputational risks are particularly sensitive.

Partial moderation

This format involves monitoring only specific channels or types of interaction. For example, a brand may closely monitor comments under promotional posts but not process every mention or respond to all posts outside of primary sales channels. This is a convenient format for companies where part of the communication is already handled by customer support or a dedicated SMM specialist.

Standardized moderation

This format is based on pre-written scripts and canned responses. It works well where there are many inquiries and some of them are repetitive. It allows you to quickly answer typical questions, maintain a consistent style, and avoid wasting extra time on identical situations.

Personalized moderation

This approach allows for a more flexible response to specific contexts. Here, the response is crafted not just based on a template, but by taking into account the user’s tone, the content of the message, and the surrounding context. This approach is particularly important for brands that value attention to detail and a warm, human style of communication.

In practice, brands rarely use just one format. More often, a combination works: basic inquiries are handled in a standardized way, while complex or emotional situations are addressed in a personalized manner.
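The combined approach can be sketched as a lookup with a fallback to a human. Everything here is an assumption made for illustration: the canned replies are placeholder text, and the list of emotional markers is a crude stand-in for the judgment a moderator would apply.

```python
# Hypothetical canned replies for repetitive questions (placeholder wording).
CANNED_REPLIES = {
    "opening hours": "We are open Monday to Friday, 9:00 to 18:00.",
    "delivery time": "Delivery usually takes 2 to 3 business days.",
}

# Hypothetical markers that suggest an emotional message.
EMOTIONAL_MARKERS = ("terrible", "awful", "never again", "!!!")


def choose_reply(message):
    """Return (mode, reply); reply is None when a person should write the answer."""
    lowered = message.lower()
    # Emotional messages skip templates even if they mention a known topic.
    if any(marker in lowered for marker in EMOTIONAL_MARKERS):
        return ("personalized", None)
    for topic, reply in CANNED_REPLIES.items():
        if topic in lowered:
            return ("standardized", reply)
    return ("personalized", None)


print(choose_reply("What is your delivery time?")[0])  # standardized
print(choose_reply("Terrible service, the delivery time was a joke")[0])  # personalized
```

The check for emotional markers runs first on purpose, so that an upset customer never receives a template.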

How to engage with your audience on different platforms

Moderation on social media always depends on the platform. What works in one place won’t necessarily have the same effect on another social network. That’s why it’s important to consider both general rules and the specific features of each channel in your strategy.

Instagram most often requires careful management of comments under posts, Stories, and promotional content. Here, the audience expects a quick response and a natural tone, especially if the brand actively engages with its community. For Instagram, it’s important to maintain a balance between friendliness and professionalism, without turning replies into dry, formal responses.

Facebook is better suited for more detailed communication, especially when it comes to discussions, inquiries, or public customer requests. Here, the moderator often has to handle not only comments but also reviews, reactions to ads, and messages on the business page. Because of this, Facebook usually requires more systematic moderation and clearer filtering rules.

TikTok operates in a highly dynamic environment where content can quickly gain reach, and comments can just as quickly become part of the general information noise. For a brand, this means that moderation here must be particularly responsive. It is important to reply promptly and keep the tone of communication under videos in check, as a single well-chosen or ill-advised comment can significantly impact brand perception.

X requires special attention due to its high visibility and the speed at which reactions spread. Here, communication often takes place in the form of instant public replies, so moderation must be as composed as possible. In such an environment, it is especially important not to lose consistency, not to get into emotional arguments, and to clearly understand the boundaries of what is acceptable.

The platform influences the format, pace, and depth of the response. That is why a one-size-fits-all approach to moderation usually works worse than a model tailored to each social network.

A brand that takes these differences into account achieves not just order in the comments, but higher-quality engagement with its audience.

How to handle negativity, spam, and fake accounts

In moderation, it’s important not to treat all “uncomfortable” messages the same. Constructive criticism, spam, fake accounts, and provocations require different response strategies. For a brand, this means first understanding the nature of the message and then deciding on a course of action. Each platform offers its own tools for this: Meta’s moderation tools allow you to hide and block comments, apply profanity filters, and block certain words; on TikTok, you can filter unwanted comments and keywords; and on X, you can use anti-spam checks, content visibility restrictions, and other tools to enforce platform rules.

If a comment contains substantive criticism, the best approach is a calm response without a defensive tone, followed by a step toward resolving the situation. Handling the situation publicly and appropriately often works better in such cases than trying to quickly delete the message. When it comes to spam or fraudulent posts, dialogue is no longer necessary: filtering, hiding, or reporting are the appropriate actions. For example, TikTok allows you to hide comments that the system identifies as spam or potentially offensive, while Facebook lets you block specific words and automatically hide their variations in comments.
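To show what hiding a word together with its variations can mean in practice, here is a rough sketch of a keyword filter. It is not how any platform implements its filters: the blocked words, the character substitutions, and the normalization steps are all assumptions made for the example.

```python
import re

# Hypothetical blocklist; a real one comes from the brand's community guidelines.
BLOCKED_WORDS = {"scam", "giveaway"}

# Common character swaps used to dodge filters (an illustrative subset).
SUBSTITUTIONS = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "@": "a", "$": "s"}
)


def normalize(word):
    """Lowercase, undo character swaps, drop non-letters, collapse repeated letters."""
    word = word.lower().translate(SUBSTITUTIONS)
    word = re.sub(r"[^a-z]", "", word)
    return re.sub(r"(.)\1+", r"\1", word)


def should_hide(comment):
    """Return True if any whitespace-separated token matches a blocked word."""
    blocked = {normalize(word) for word in BLOCKED_WORDS}
    return any(normalize(token) in blocked for token in comment.split())


print(should_hide("Free G1VEAWAY, click here"))  # True
print(should_hide("This is a sc@aam!!!"))  # True
print(should_hide("Great product, thank you"))  # False
```

Aggressive normalization like this can also catch innocent words, so a filter of this kind is better suited to hiding comments for review than to deleting them outright.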

Dealing with fake accounts and provocations should be viewed as a way to protect the brand’s space, not as an occasion for discussion. X separately describes mechanisms to counter inauthentic behavior and spam, and also provides the ability to report fake accounts and suspicious activity directly through its built-in reporting system.

This clearly illustrates the underlying logic: where there is no real dialogue, moderation should function as a filter and visibility control, rather than as an endless explanation.

When moderation becomes part of crisis communication

There are situations where moderation ceases to be a matter of handling individual comments and becomes a tool for managing the reputation landscape. This happens when negative sentiment spreads rapidly, a single topic generates a large number of posts, or a brand faces a public wave of discontent. In such cases, it is important not only to respond but also to control what exactly remains visible to the audience.

Crisis moderation operates at a different pace. Here, a simple, quick response from a moderator is no longer sufficient, as decisions often involve PR, customer support, or department leadership. This involves more rigorous text approval, stricter oversight of public responses, and a dedicated process for complex cases.

It is in this sense that moderation becomes part of crisis communication: it dampens the noise and helps maintain control at a time when the audience is reacting particularly strongly.

On TikTok and Facebook, a crisis response plan can rely on built-in comment filtering and visibility control tools. TikTok allows you to hide filtered comments and, during live streams, review and approve them manually. Facebook, in turn, provides separate moderation settings for comments on pages and in ad campaigns. This matters because in fast-paced crisis situations the technical aspects of moderation are often just as important as the text of the response itself.

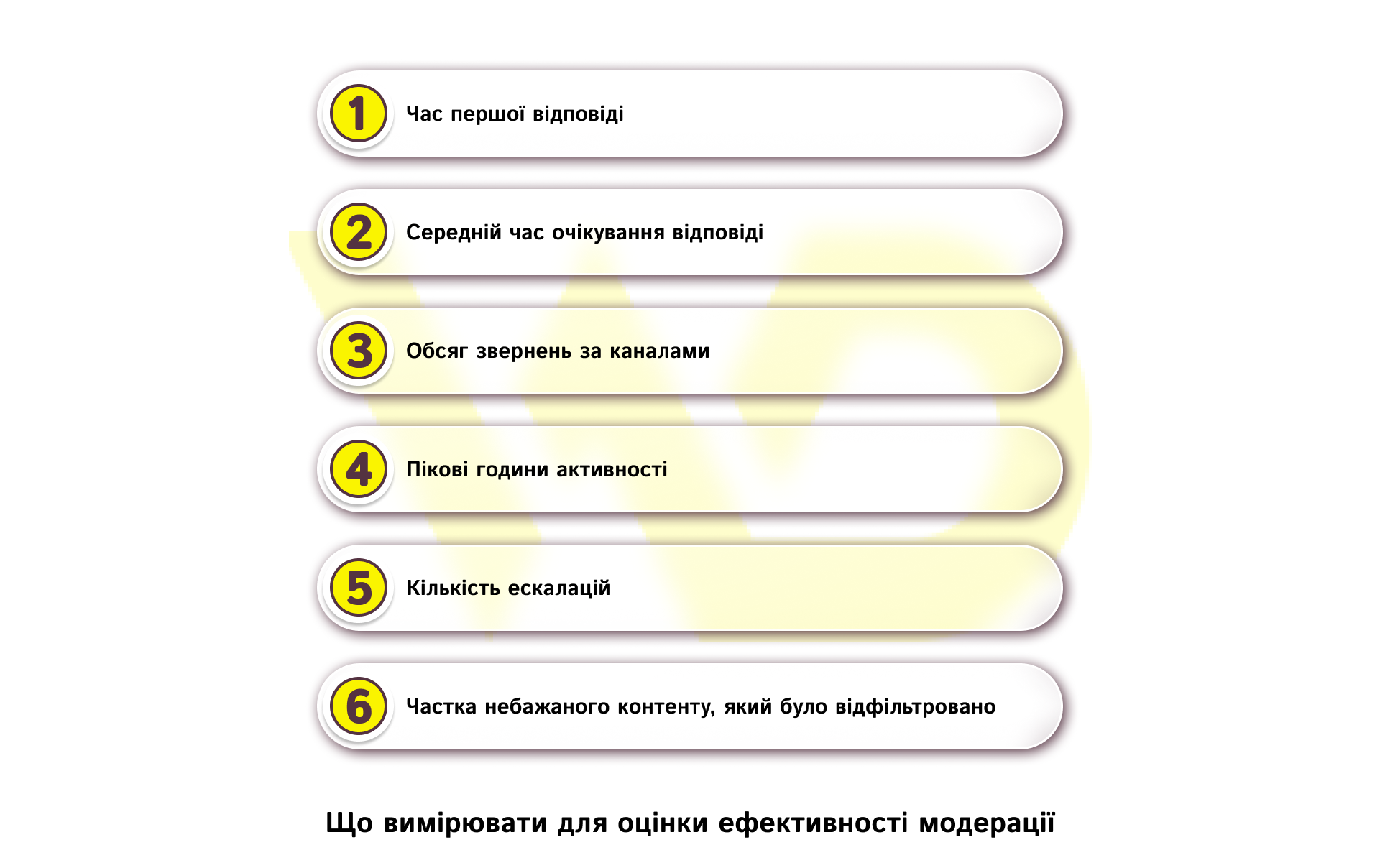

How to measure moderation effectiveness

Effective moderation should be evident not only in the quality of communication but also in the numbers. Sprout Social recommends focusing first on first response time, average response time, the volume of inquiries by channel, and peak hours. This allows you to assess response speed and how the team handles the flow of requests during different periods.

First response time

Shows how quickly the brand responds to a user’s first inquiry. For example, if a person left a request in the comments in the evening and the response didn’t appear until the next day, this already affects the perception of the service. This metric is particularly important for social media, as the audience expects a quick response.

Average response time

Helps you see how long, on average, a user waits for each reply throughout a dialogue with the brand. This is no longer just about the initial response, but about the entire communication process. If the team responds quickly at first but then makes the person wait several hours for clarification, the overall impression of the service still deteriorates.
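Both time metrics can be computed from message timestamps. The sketch below assumes a conversation is a chronologically sorted list of `(timestamp, sender)` pairs and that average response time is taken as the mean wait before each brand reply; analytics tools may define these metrics differently, and the data is invented for the example.

```python
from datetime import datetime, timedelta


def response_gaps(messages):
    """Return waiting times between each unanswered user message and the next brand reply.

    `messages` is a chronologically sorted list of (timestamp, sender) pairs,
    where sender is "user" or "brand".
    """
    gaps = []
    waiting_since = None
    for timestamp, sender in messages:
        if sender == "user" and waiting_since is None:
            waiting_since = timestamp
        elif sender == "brand" and waiting_since is not None:
            gaps.append(timestamp - waiting_since)
            waiting_since = None
    return gaps


def first_response_time(messages):
    """Wait before the first brand reply, or None if the brand never replied."""
    gaps = response_gaps(messages)
    return gaps[0] if gaps else None


def average_response_time(messages):
    """Mean wait across all brand replies, or None if the brand never replied."""
    gaps = response_gaps(messages)
    return sum(gaps, timedelta()) / len(gaps) if gaps else None


start = datetime(2026, 1, 10, 18, 0)
conversation = [
    (start, "user"),
    (start + timedelta(minutes=10), "brand"),
    (start + timedelta(minutes=15), "user"),
    (start + timedelta(hours=3, minutes=15), "brand"),
]
print(first_response_time(conversation))  # 0:10:00
print(average_response_time(conversation))  # 1:35:00
```

The example mirrors the situation described above: the first reply arrives in ten minutes, but the three-hour wait for the follow-up pulls the average up to an hour and thirty-five minutes.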

Volume of inquiries by channel

Provides insight into where moderation is under the most strain. For example, on Facebook, there may be more inquiries in comments under ads, while on Instagram, they may be in direct messages. If this distribution isn’t analyzed, the team may underestimate the actual workload on individual channels.

Peak activity hours

Show which time periods require the most attention from moderation. If the main flow of comments occurs in the evening or on weekends, and the team only works during standard business hours, some inquiries will inevitably go unanswered. That’s why it’s important to align the moderation schedule with the audience’s actual behavior.
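Volume by channel and peak hours are both simple counts over the same log of inquiries. In this sketch the channel names and timestamps are invented, and each inquiry is assumed to be a `(channel, created_at)` pair exported from whatever tool the team uses.

```python
from collections import Counter
from datetime import datetime


def volume_by_channel(inquiries):
    """Count inquiries per channel."""
    return Counter(channel for channel, _ in inquiries)


def peak_hours(inquiries, top=3):
    """Return the `top` busiest hours of the day, busiest first."""
    by_hour = Counter(created_at.hour for _, created_at in inquiries)
    return [hour for hour, _ in by_hour.most_common(top)]


inquiries = [
    ("instagram_dm", datetime(2026, 1, 10, 20, 5)),
    ("instagram_dm", datetime(2026, 1, 10, 20, 40)),
    ("facebook_ads", datetime(2026, 1, 10, 20, 15)),
    ("facebook_ads", datetime(2026, 1, 10, 11, 0)),
    ("instagram_dm", datetime(2026, 1, 11, 21, 10)),
]
print(volume_by_channel(inquiries).most_common())  # [('instagram_dm', 3), ('facebook_ads', 2)]
print(peak_hours(inquiries, top=1))  # [20]
```

If the busiest hour falls outside the team's working schedule, as 20:00 would for a nine-to-six shift, that is a direct argument for adjusting coverage.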

Number of escalations

Shows how many inquiries the moderator cannot resolve independently and must escalate. For example, if delivery complaints regularly go to support and reputation cases go to PR, that’s normal. But if there are too many escalations, it’s a sign that response protocols or lines of responsibility need to be reviewed.

Percentage of unwanted content that was filtered

Helps assess how effectively the platform’s built-in tools are working. For example, if the system automatically hides most spam comments before the audience sees them, this significantly reduces the workload on moderators and lowers reputational risks. If the metric remains low, it’s worth reviewing the filter settings or expanding the list of keywords and triggers.
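The last two metrics are ratios. The sketch below assumes one possible definition of each: escalations as a share of all inquiries, and filtered content as the share of unwanted comments hidden automatically rather than removed by hand. The numbers are made up.

```python
def escalation_rate(total_inquiries, escalated):
    """Share of inquiries the moderator passed on to another team."""
    if total_inquiries == 0:
        return 0.0
    return escalated / total_inquiries


def filtered_share(auto_hidden, manually_removed):
    """Share of unwanted comments caught by automatic filters."""
    unwanted = auto_hidden + manually_removed
    if unwanted == 0:
        return 0.0
    return auto_hidden / unwanted


print(f"{escalation_rate(200, 30):.0%}")  # 15%
print(f"{filtered_share(90, 10):.0%}")  # 90%
```

Tracked over time, a rising escalation rate or a falling filtered share points to the review of protocols or filter settings mentioned above.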

For moderation to truly work effectively, it should be evaluated regularly, in conjunction with the team’s workload, response speed, and the quality of escalation. The best results come not from a single metric, but from the combination of all of them.

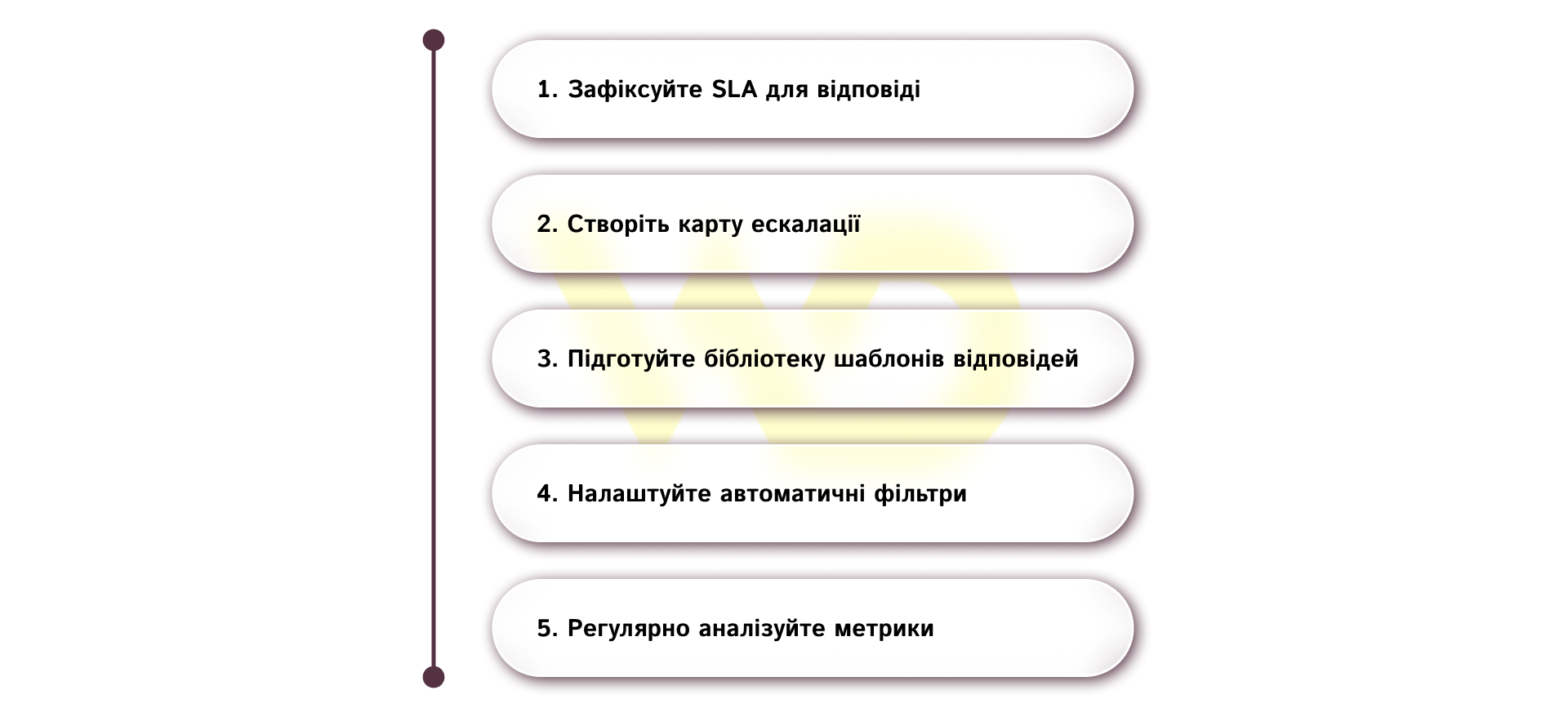

Practical tips for a brand launching moderation

It’s best to start not with a long list of rules, but with an audit of what’s already happening on social media. It’s worth analyzing which inquiries are most frequently repeated, where the team responds slowly, which topics generate the most emotion, and which platform tools can be activated right now. This allows you to build a moderation system around real-world scenarios, rather than theoretical assumptions.

Next, it’s time to move on to practical steps.

Effective moderation always combines standardized processes, technical tools, and live communication. It is this balance that helps a brand maintain responsiveness, manage risks, and create a positive experience when interacting with its audience.

14/05/2026
